I've spent a lot of time over the past few years in conversations about AI. With boards, with investors, with commercial teams. The question is almost always the same: “When are we going to have AI doing this for us?”

My answer is always the same too: sooner than you think, but not in the way you’re imagining.

Because here’s what I’ve seen, repeatedly, across our industry: businesses invest in AI capability and then wonder why it doesn’t deliver. The models are solid. The use cases are well-defined. And yet the results stay stuck at pilot stage, never quite reaching customers at scale.

The problem is almost never the model. It’s what’s holding it up.

Why AI Readiness Is Harder Than It Looks

AI models are, in many ways, the easy part. The hard part is building a platform that can actually feed them reliably, run them at speed, keep them accurate over time and measure real impact.

Most eCommerce platforms were built in a different era - designed to handle transactions reliably and report on performance after the fact. That simply doesn’t work for AI-powered commerce, which demands real-time data, on-the-fly decisions, and infrastructure that can handle the pressure without slowing down.

When you try to layer AI on top of a platform that wasn’t built for it, you end up with something that looks impressive in a demo and disappoints in production.

And honestly, it’s the challenge I hear about most from my colleagues right now.

It Starts With Owning Your Customer Data

Before we talk about models, we need to talk about data - specifically, how fragmented most organisations’ customer data actually is.

A customer might find you through a paid social ad, browse on mobile, and complete their purchase on desktop three days later. In many platforms, those are three disconnected records. There’s no unified identity. No continuous thread.

That fragmentation is fatal for AI. Recommendation engines, churn prediction models, real-time personalisation - they all depend on a coherent picture of who the customer is and what they’ve done. Without it, you’re feeding your models incomplete inputs and wondering why the outputs aren’t reliable.

The technical answer is identity resolution: stitching those separate records into one by connecting cookies, device IDs, email addresses, and account logins into a single persistent customer profile. It sounds straightforward, but in practice it’s a conscious design choice - not something you can fix after the fact.
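To make the stitching idea concrete, here is a minimal sketch, assuming identifiers are linked whenever two of them are observed together (for example, a cookie and an email at login). The `IdentityGraph` class and the `cookie:`/`device:`/`email:` prefixes are illustrative names, not any specific product's API; a production system would also need rules for shared devices and for un-merging records.

```python
from collections import defaultdict


class IdentityGraph:
    """Union-find over identifiers (cookie, device ID, email, account login)."""

    def __init__(self):
        self._parent = {}

    def _find(self, identifier):
        # Register unseen identifiers as their own root.
        self._parent.setdefault(identifier, identifier)
        root = identifier
        while self._parent[root] != root:
            root = self._parent[root]
        # Path compression: point every node on the path straight at the root.
        while self._parent[identifier] != root:
            next_id = self._parent[identifier]
            self._parent[identifier] = root
            identifier = next_id
        return root

    def link(self, a, b):
        """Record that two identifiers were observed together."""
        root_a, root_b = self._find(a), self._find(b)
        if root_a != root_b:
            self._parent[root_b] = root_a

    def profiles(self):
        """Group every known identifier under its resolved profile."""
        groups = defaultdict(set)
        for identifier in list(self._parent):
            groups[self._find(identifier)].add(identifier)
        return list(groups.values())


graph = IdentityGraph()
graph.link("cookie:abc", "device:mobile-1")              # ad click, mobile browse
graph.link("cookie:xyz", "email:sam@example.com")        # desktop purchase
graph.link("device:mobile-1", "email:sam@example.com")   # later login on mobile
```

After the third link, `graph.profiles()` returns a single profile containing all four identifiers: the three disconnected records have become one continuous thread.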

McKinsey’s research puts the commercial stakes clearly: 71% of consumers expect personalised interactions, and faster-growing companies drive 40% more of their revenue from personalisation than their slower-growing peers. But you can’t personalise what you can’t see.

Going Real-time Means Rethinking Your Entire Data Flow

One of the shifts I think about most is the move from batch analytics to real-time commerce. It sounds like an upgrade. It’s actually a different way of thinking about your entire data infrastructure.

Traditional pipelines were designed to collect data and process it later. That model works fine for overnight reports. It doesn’t work when a customer is actively browsing your site and your recommendation engine is still working from yesterday’s signals.

The architecture we’re moving towards treats every click, search, or cart update as a signal the whole platform responds to immediately. Kafka and similar streaming platforms are the backbone of this approach - the connective tissue that keeps every part of the platform in sync.

In plain terms, your platform stops being a system of record and starts becoming a system of response. Customer behaviour becomes a live input, not a historical report.

When your platform responds in the moment, customers notice - and so does your revenue. But it requires intentional infrastructure investment. It doesn’t happen by accident.
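The "system of response" idea can be sketched in a few lines. This is an in-process stand-in, not Kafka: the `EventBus` class, the `cart.updated` topic name, and the two consumers are hypothetical, and a real deployment would put a streaming platform between publisher and subscribers. What it shows is the shape of the pattern: one behavioural event, several parts of the platform reacting to it straight away.

```python
import time
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Event:
    topic: str            # e.g. "cart.updated" (hypothetical topic name)
    customer_id: str
    payload: dict
    ts: float = field(default_factory=time.time)


class EventBus:
    """In-process stand-in for a streaming platform: topics and subscribers."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, event):
        # Every subscriber to the topic receives the event on publish.
        for handler in self._subscribers[event.topic]:
            handler(event)


# Two hypothetical consumers reacting to the same signal.
live_cart_value = defaultdict(float)
recent_categories = defaultdict(list)


def update_cart_value(event):
    live_cart_value[event.customer_id] += event.payload["price"] * event.payload["qty"]


def track_category(event):
    recent_categories[event.customer_id].append(event.payload["category"])


bus = EventBus()
bus.subscribe("cart.updated", update_cart_value)
bus.subscribe("cart.updated", track_category)
bus.publish(Event("cart.updated", "cust-42",
                  {"price": 15.0, "qty": 2, "category": "bottles"}))
```

Neither consumer knows about the other, and a third can be added without touching the publisher. That decoupling is the property a streaming backbone gives you at platform scale.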

The Feature Store: Solving a Problem Most Teams Don’t Know They Have

Here’s a technical detail that I think deserves more attention in boardroom conversations: the gap between how a model behaves in testing versus how it behaves in the real world.

This is known as training-serving skew: the data a model was trained on differs from the data it receives once it’s live in production. The model behaves one way in testing and another in reality - and the gap is often invisible until you start digging into why performance has drifted.

The solution is a feature store: a centralised system that manages the signals used by machine learning models, ensuring consistency between training and production environments. Instead of each team independently calculating their own version of customer signals - recency, category affinity, predicted lifetime value - there’s a single shared source that everyone draws from.

It won’t win you any headlines. But in my experience, it’s one of the biggest differentiators between AI that scales and AI that doesn’t.
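The core idea of a feature store fits in a short sketch: define each signal once and have both the training pipeline and the live serving path call the same definition. The `FEATURES` registry, the function names, and the customer record layout are assumptions for illustration; real feature stores add storage, versioning, and point-in-time correctness on top.

```python
from datetime import datetime, timezone


def recency_days(last_order_at, as_of):
    """Whole days since the customer's last order, measured at `as_of`."""
    return (as_of - last_order_at).days


def category_affinity(category_views, category):
    """Share of a customer's views in one category (0.0 if there are no views)."""
    total = sum(category_views.values())
    return category_views.get(category, 0) / total if total else 0.0


# The registry is the single shared source: feature name -> definition.
FEATURES = {
    "recency_days": lambda c, as_of: recency_days(c["last_order_at"], as_of),
    "affinity_footwear": lambda c, as_of: category_affinity(c["category_views"], "footwear"),
}


def build_feature_vector(customer, as_of):
    """Called unchanged by the training pipeline and the serving path."""
    return {name: fn(customer, as_of) for name, fn in FEATURES.items()}


customer = {
    "last_order_at": datetime(2025, 1, 1, tzinfo=timezone.utc),
    "category_views": {"footwear": 3, "outerwear": 1},
}
as_of = datetime(2025, 1, 11, tzinfo=timezone.utc)

training_row = build_feature_vector(customer, as_of)
serving_row = build_feature_vector(customer, as_of)
```

Because both rows come from the same definitions, they cannot silently diverge. If two teams each wrote their own `recency_days`, one counting from order date and the other from dispatch date, the model would see different numbers in training and in production.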

Building the Stack That Makes It All Possible

A unified data layer and a feature store set the foundation - but they still need a modern infrastructure stack to run on. And this is where I see a lot of organisations underestimate the complexity involved.

AI-driven commerce isn’t just about processing large volumes of data. It’s about doing it while responding to live customer interactions within milliseconds. That combination of scale and speed rules out most legacy architectures and points clearly towards modern infrastructure where each piece can grow or shrink on its own, without dragging the rest down with it.

In practice, the stack typically looks something like this:

At the storage layer, object storage platforms like Amazon S3 or Azure Data Lake hold the raw datasets used for analytics and model training - your historical foundation. Sitting alongside them, data warehouses such as Snowflake, BigQuery, or Redshift handle the large-scale analytical queries that power business reporting and experimentation.

For real-time processing, streaming infrastructure - Kafka being the most widely adopted - distributes behavioural events across services the moment they happen. And for the fast-access data that AI models need during live inference, low-latency databases like Redis or DynamoDB hold session data and real-time customer features, and return them fast enough that the customer never waits.

Then there’s model serving: deploying trained models as microservices using frameworks like TensorFlow Serving, TorchServe, or KServe. These connect directly to your storefront or marketing tools - making AI a live capability woven into the platform rather than a separate tool running alongside it.

The architectural principle tying all of this together is independent scalability. When traffic spikes during a flash sale or seasonal peak, each layer flexes to meet demand without dragging down the others. Your AI workloads keep running. Your recommendations keep serving. Your customers don’t notice a thing.

But a great stack alone doesn’t keep your models accurate. That takes something more.

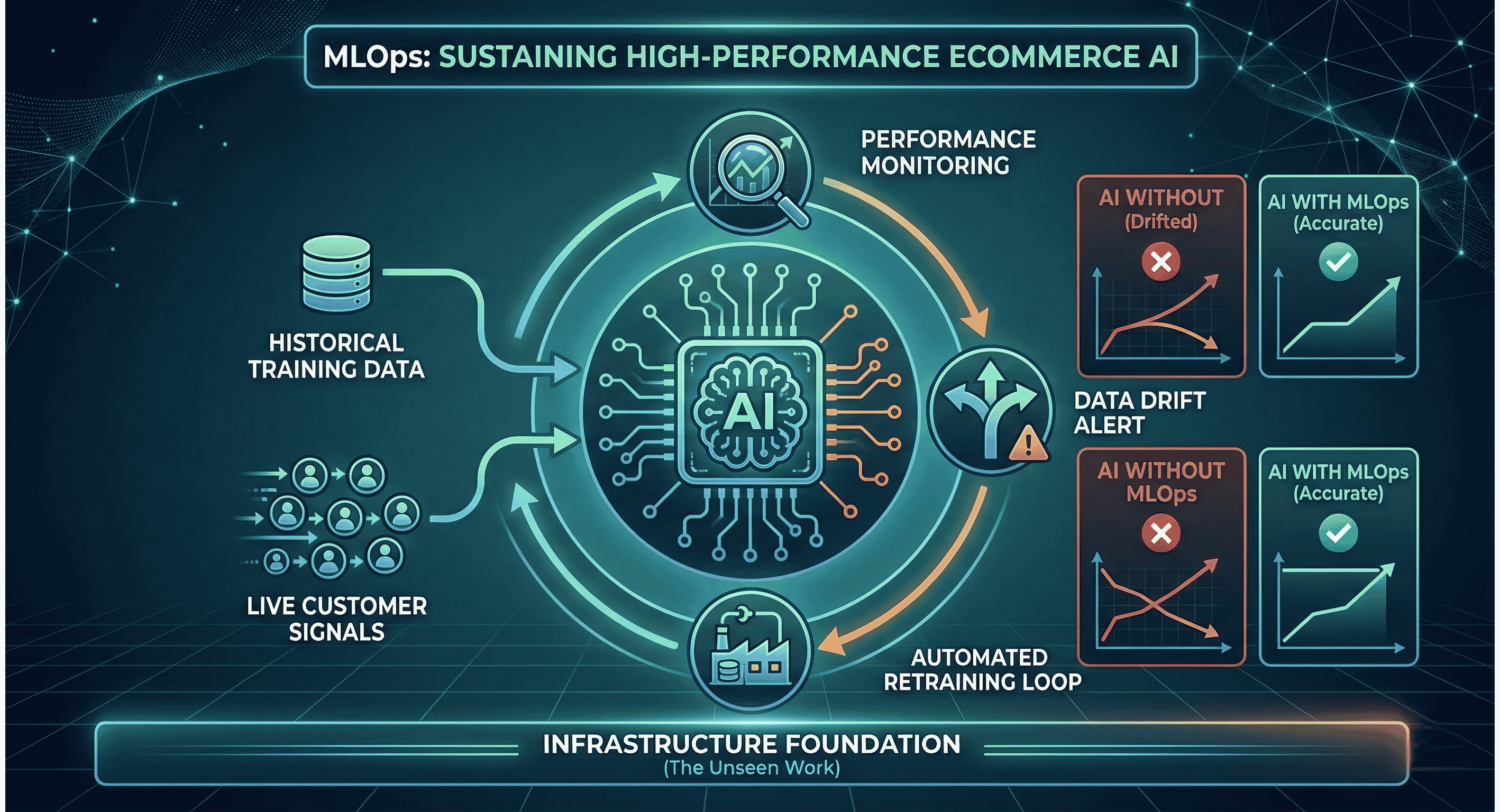

MLOps: The Discipline That Keeps AI Honest

Deploying a model is a milestone. Maintaining it is the real work.

Customer behaviour shifts. Market conditions change. A model trained six months ago may have been accurate then and quietly wrong now - not because anything broke, but because the world it was trained on no longer matches the world it’s operating in. This is called data drift, and left undetected, it quietly undoes the value you worked hard to build.

This is why MLOps - the operational discipline around machine learning systems - is something I’d put on the strategic agenda of any CTO in eCommerce. It covers automated model monitoring, retraining pipelines, performance benchmarking, and a careful process for testing changes on a small slice of users before rolling them out to everyone.
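Automated monitoring for drift can start very simply. The sketch below computes the Population Stability Index (PSI), one common way to compare a feature's distribution at training time with what production is seeing now. It is one option among several, not the only way to detect drift, and the bin count and the floor for empty bins are assumptions you would tune.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a training-time sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0   # fall back to 1.0 if all values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the training range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training_sample = [i / 100 for i in range(100)]
production_sample = [v + 0.5 for v in training_sample]   # simulated shift

psi_same = population_stability_index(training_sample, training_sample)
psi_shifted = population_stability_index(training_sample, production_sample)
```

Identical samples give a PSI of zero, and the shifted sample gives a much larger value. A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a shift worth investigating, though the right alert threshold depends on the feature and the model.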

Google’s ML architecture guidance is clear on this: building in automated checks and ongoing monitoring isn’t optional - it’s what keeps the whole thing honest.

The businesses that treat MLOps as a core engineering capability, rather than an afterthought, are the ones whose AI investment pays back. The others are constantly firefighting.
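Testing a change on a small slice of users before rolling it out is often done with deterministic bucketing. Here is a minimal sketch, assuming users are identified by a stable ID; the experiment name and the `ranker-v1`/`ranker-v2` model names are hypothetical.

```python
import hashlib


def in_canary(user_id, experiment, rollout_percent):
    """Deterministically assign a user to the canary slice for one experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100   # stable bucket in 0..99
    return bucket < rollout_percent


def choose_model(user_id, rollout_percent=5):
    # "ranker-v2" is the candidate model; "ranker-v1" is the current one.
    if in_canary(user_id, "ranker-v2-rollout", rollout_percent):
        return "ranker-v2"
    return "ranker-v1"
```

Hashing rather than random sampling means a given customer always sees the same model, and raising `rollout_percent` from 5 to 20 only adds users to the slice without reshuffling those already in it. Including the experiment name in the hash keeps slices for different experiments independent of one another.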

Privacy As a Design Principle

I want to address something that sometimes gets treated as a compliance checkbox rather than a strategic question: privacy.

Salesforce research found that 73% of customers expect companies to understand their individual needs - but 71% say they’re more protective of their personal data than ever before. That’s not a contradiction. It’s a signal about what trust actually looks like in 2026.

From an architectural perspective, this means privacy can’t be retrofitted. Consent management, anonymised data that can’t be traced back to a specific person, strict data access controls, GDPR-compliant data handling - these need to be embedded in the foundation, not added on top.
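As a small illustration of what "embedded in the foundation" can mean, the sketch below gates every record on consent and swaps the raw email for a keyed hash before anything reaches analytics or model training. The function names, the `personalisation` consent flag, and the record layout are assumptions. One caveat worth stating plainly: a keyed hash is pseudonymisation, not full anonymisation, because anyone holding the key can re-link the data, and under GDPR pseudonymised data still counts as personal data.

```python
import hashlib
import hmac


def pseudonymise(identifier, secret_key):
    """Replace a raw identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def to_analytics_record(event, consent, secret_key):
    """Return a record safe to pass downstream, or None if consent is missing."""
    if not consent.get("personalisation", False):
        return None
    return {
        "customer_key": pseudonymise(event["email"], secret_key),
        "category": event["category"],   # pass on only the fields the model needs
    }


key = b"example-key-load-from-a-secrets-manager"   # placeholder, never hard-code
event = {"email": "sam@example.com", "category": "footwear", "ip": "203.0.113.7"}

record = to_analytics_record(event, {"personalisation": True}, key)
blocked = to_analytics_record(event, {"personalisation": False}, key)
```

The same email always maps to the same `customer_key`, so models can still learn from behaviour over time, while the email and IP address never leave the ingestion layer. Without consent, `blocked` is `None` and nothing flows downstream.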

The forward-looking view is that privacy-by-design isn’t just risk management. It’s a competitive advantage. Customers who trust you share more signal. More signal means better models. Better models mean more relevant experiences. It’s a virtuous cycle - but only if you’ve built the foundation to support it.

Where I Think This Is All Heading

The most important shift I see coming in eCommerce AI isn’t in the models themselves - it’s in the infrastructure around them becoming truly real-time, end-to-end.

Right now, even well-architected platforms have latency at various points: data collection, feature computation, model inference, response delivery. Over the next few years, I expect the most competitive platforms to close those gaps significantly, moving towards architectures where intelligent decisions happen within milliseconds at every customer touchpoint.

That means the infrastructure decisions being made today - how data pipelines are designed, how models are deployed, how features are managed - will directly determine competitive positioning in three to five years’ time.

Companies that treat AI as an add-on will keep hitting the same wall. The ones that treat it as an architectural discipline are the ones I expect to pull ahead.

The Strategic Takeaway

If there’s one thing I’d want you to leave with, it’s this:

Before asking what AI should do for your business, ask whether your platform is ready to support it.

That means four things in practice:

A unified customer data layer with real identity resolution

Event streaming that makes behaviour a live input, not a historical one

A feature store and modern infrastructure stack that ensure model consistency and scalability

MLOps practices that keep AI accurate and reliable over time

Get those foundations right, and AI becomes something that gets better over time: it improves with every customer interaction, adapts to change, and delivers personalisation that actually earns customer trust.

Skip them, and you’ll keep running impressive pilots that never quite scale.

That, in my view, is the strategic question every CTO in eCommerce should be asking right now.
